"AI-assisted" is not the same as "AI-generated," and Canadian publishers are increasingly being asked to be specific about which they're publishing. The legal exposure isn't always obvious, the consumer-trust exposure is bigger than most editorial teams assume, and the structured-data tools to disclose properly are now stable. We've reviewed disclosure practice across roughly 40 Canadian publisher and brand sites this quarter — most are under-disclosing.

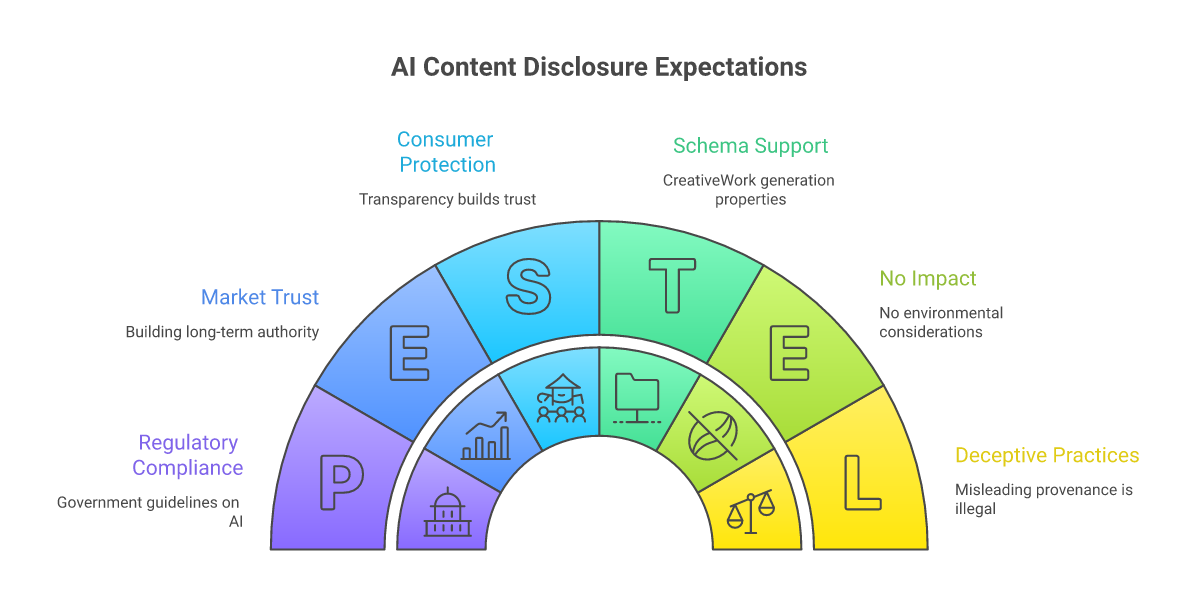

Why disclosure matters under Canadian law

The Competition Bureau interprets misleading representations under the Competition Act broadly, and the "general impression" test means that what a reasonable consumer would conclude about authorship matters. A blog post presented as expert-authored but substantially AI-generated, with no disclosure, risks creating exactly that kind of misleading impression. Quebec's Consumer Protection Act adds another layer for content directed at Quebec residents. Neither regime has produced a marquee AI-content enforcement case yet, but the policy direction is clear; see the Competition Bureau's digital-economy guidance materials for the framing.

Schema.org now supports provenance disclosure

Schema.org's CreativeWork type includes `creativeWorkStatus` and related properties that publishers can use to disclose AI involvement in machine-readable form. This tells Google, AI engines, and increasingly other systems that consume structured data what kind of content you're producing. Combined with visible labels, structured-data disclosure is rapidly becoming the publisher norm in 2026.
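As a rough illustration of what page-level disclosure could look like, here is a minimal Python sketch that builds a JSON-LD block for an article. The function names, the sample values, and the wording of the status string are our assumptions for illustration, not a Schema.org or Google requirement; `creativeWorkStatus` accepts free text or a DefinedTerm, so the phrasing is yours to set.

```python
import json


def build_article_jsonld(headline: str, editor_name: str,
                         ai_status: str, date_published: str) -> str:
    """Return a JSON-LD string describing an article and its AI involvement.

    Hypothetical helper: the field choices are one possible arrangement.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        # The human editor who reviewed the piece.
        "editor": {"@type": "Person", "name": editor_name},
        # Free-text status; the wording is an assumption, not a standard value.
        "creativeWorkStatus": ai_status,
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def to_script_tag(jsonld: str) -> str:
    """Wrap a JSON-LD string in a script tag for the page head."""
    # Escape "</" so the JSON cannot close the script element early.
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'


if __name__ == "__main__":
    markup = build_article_jsonld(
        headline="Sample headline",
        editor_name="Sample Editor",
        ai_status="Drafted with AI assistance; reviewed by a human editor",
        date_published="2026-05-10",
    )
    print(to_script_tag(markup))
```

Generating the block from the same CMS fields that drive the visible label keeps the two disclosures from drifting apart.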

The trust calculus

Across the Canadian publishers we audit, sites with explicit AI-content policies and visible labels on materially AI-produced content earn higher engagement on the surrounding human-authored content than sites that publish everything unlabelled. The mechanism appears to be that disclosure works as a quality signal: readers trust the unlabelled content more when they can see that labelling is happening at all. Editorial teams that resist disclosure for "consistency" reasons typically discover the engagement cost once they audit it.

What to disclose, and how

The practice that holds up to both regulatory and reader scrutiny has three parts: a public AI use policy linked from your About page, visible inline labels on materially AI-produced articles or images, and structured data on the page. The labels don't need to be heavy-handed. "Image generated with AI" or "This article was drafted with AI assistance and reviewed by [editor]" is enough. Treat it the way newsrooms have long treated photo credits.
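For teams that want the inline label applied consistently, a small template helper is one way to do it. The sketch below is hypothetical: the content-kind keys and the function name are ours, and only the label wording comes from the two examples above.

```python
from typing import Optional

# Label wording follows the two examples above; the keys are hypothetical.
LABELS = {
    "ai_image": "Image generated with AI",
    "ai_assisted_article": (
        "This article was drafted with AI assistance and reviewed by {editor}"
    ),
}


def disclosure_label(kind: str, editor: Optional[str] = None) -> Optional[str]:
    """Return the visible label for a content kind, or None if none applies."""
    template = LABELS.get(kind)
    if template is None:
        # Unknown or human-authored content kinds carry no label.
        return None
    if "{editor}" in template:
        if not editor:
            raise ValueError("an editor name is required for this label")
        return template.format(editor=editor)
    return template
```

Requiring an editor name for the article label means an unreviewed draft cannot silently ship with a "reviewed by" claim.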

Where this aligns with E-E-A-T

Google's E-E-A-T framework rewards transparency about content production. AI engines that cite content increasingly deprioritize sources whose authorship can't be verified. Publishers who disclose well aren't penalized — they're advantaged in citation pipelines. Canadian agencies publishing client content on behalf of brands should be transparent about AI involvement in client work too; this is a direct application of our Honest Representation standard.

Published by CanadianInternetMarketingAssociation.com on 10 May 2026. General guidance, not legal advice — consult counsel for specific compliance questions.
